No one would question the importance of keeping customers satisfied. In a small company it is very evident if customers are dissatisfied: people complain directly to the proprietor. The situation is very different in a large company. Customers are dealt with by many different people, and there are multiple touch points for any single customer which could cause dissatisfaction – the sales representative, the customer service team, the delivery people, the finance department and so on. The managers of the company undoubtedly have hundreds of customers, possibly scattered around the world, and the only way they can know for sure how satisfied those customers are is by carrying out a survey. This brings with it a number of potential problems and the survey itself is the least of these. Measuring customer satisfaction is easy compared to the task of implementing improvements.

Doing Is Harder Than Thinking About It

In my experience, too many customer satisfaction studies gather dust because there is no mechanism for turning the market research findings into tangible improvements. This White Paper addresses that problem. It begins with a discussion of what customer satisfaction scores mean and concludes with a four-point plan for making improvements.

What Do Customer Satisfaction Scores Mean?

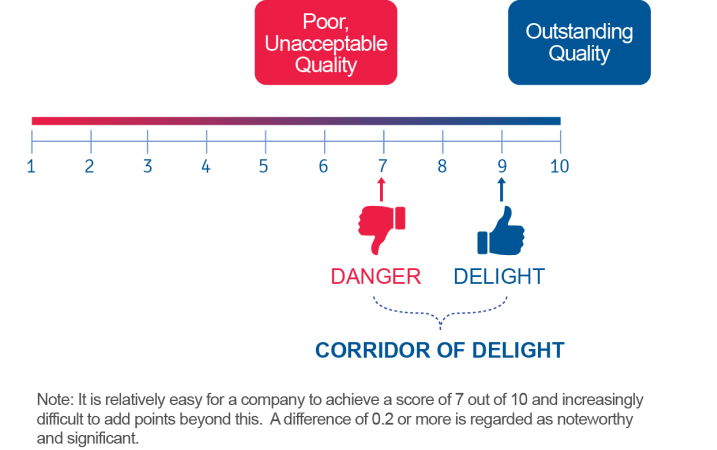

Most customer satisfaction surveys use numerical scales which measure levels of satisfaction. Respondents are asked to provide a score on their satisfaction with a supplier using a scale that runs from 1 (or 0) to 10, where 1 indicates total dissatisfaction and 10 is equal to total satisfaction. Ninety percent of all companies measuring their customer satisfaction achieve average scores in the range of 7 to 9. These scores between 7 and 9 are known as “the corridor of satisfaction”. On this 10-point scale, 8 is in practice the midpoint, not 5. This makes sense if you think about it. If you gave your dentist a satisfaction score of 5 out of 10, you almost certainly would be looking for a new one. We only do business with suppliers who provide good products or services and “good”, on a 10-point scale, begins at around a score of 7 for most people.

It is also fair to say that it is relatively easy to enter the corridor of satisfaction that runs between 7 and 9 and progressively more difficult to increase the score once within it. Moving an average satisfaction score from 7 to 7.2 is much easier than moving it from 8.4 to 8.6. Because the corridor of satisfaction is quite narrow, a movement of 0.2 is significant.

It should be noted that overall satisfaction is normally asked as a separate question and is not calculated as an average of individual satisfaction scores given to different elements of a company’s product or service. This is because it is possible to have a relatively high score on individual components of an offer and a lower (or higher) score on overall satisfaction. A lower overall satisfaction score could reflect an image problem that a company is facing or it could arise from resentment because a company holds a monopoly position.

On the subject of customer satisfaction measurement, some people prefer “top box” scores to mean scores. In other words, the measure they find most useful for tracking satisfaction is the percentage of people who give a score of 9 or 10 out of 10 (“top box”). This focuses attention on what really matters – the proportion of people that give an excellent rating – but for some companies it may mean they have little to work on if they have a lot of scores of 7 or 8 out of 10.
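The difference between the two measures can be illustrated with a minimal Python sketch. The ratings are invented for illustration, and the function name `top_box_share` is hypothetical rather than part of any survey tool.

```python
from statistics import mean


def top_box_share(scores, threshold=9):
    """Return the percentage of scores at or above the threshold (9 or 10 by default)."""
    if not scores:
        raise ValueError("scores must not be empty")
    return 100 * sum(1 for s in scores if s >= threshold) / len(scores)


# Hypothetical overall satisfaction ratings on a 1 to 10 scale.
ratings = [7, 8, 8, 7, 9, 8, 7, 10, 8, 8]

mean_score = mean(ratings)        # average rating across all respondents
top_box = top_box_share(ratings)  # percentage of respondents giving 9 or 10

print(f"Mean score: {mean_score:.1f}")
print(f"Top box: {top_box:.0f}%")
```

In this made-up sample the mean sits comfortably inside the corridor of satisfaction, yet only a small share of respondents gives an excellent rating, which is the situation described above for companies with many scores of 7 or 8.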

Market researchers often measure numerous aspects of products and services rather than keeping the survey at a high level and asking simply about overall satisfaction. This is because they are seeking to find out which features of a company’s customer value proposition are driving overall satisfaction.

Companies do not live in isolation and their customer satisfaction score must be related to those achieved by competitors. A company with an overall satisfaction score of 6.5 out of 10 is at face value in a very poor position but if competitors have scores of only 5.5 or 6 out of 10, its relative position may not be so bad. We need therefore to understand how the company sits in its marketplace against direct competitors and other companies that may be used as a benchmark.

Even though our interest is in business to business markets, the buyers and specifiers that rate their suppliers have experience of a much wider range of products and services. If they visit John Lewis in the UK or Lord & Taylor in the US, they will receive a high quality of both products and services, which inevitably becomes the benchmark against which they judge old-fashioned, sloppy service from a business to business supplier. In other words, benchmarks by which we are judged exist everywhere, in every aspect of our life.

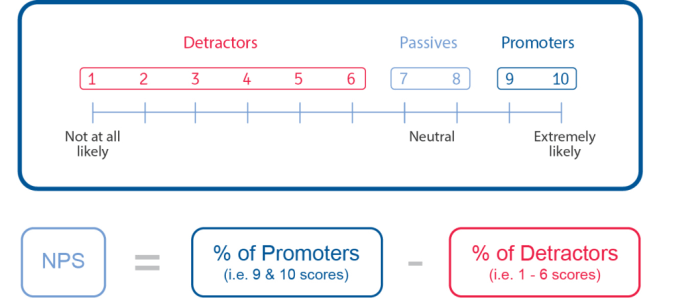

Customer satisfaction scores are not the only measure used in customer satisfaction surveys. Most surveys also take the opportunity to ask respondents how likely they are to recommend a company, and the answers to this question are used to compute its Net Promoter Score (NPS). Using a scale from 1 (or 0) to 10 on the likelihood to recommend question, it is assumed that people who give a rating of 9 or 10 will be strong advocates or promoters of a supplier while those who give a score of 6 or below are potential detractors and would not recommend the company – they may even “rubbish” it. Subtracting the percentage of respondents who give a score of 6 or below from the percentage who give a score of 9 or 10 produces the Net Promoter Score. Scores of 7 or 8 out of 10 are deemed neutral and are counted in neither group, although these respondents remain in the base on which both percentages are calculated.
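The calculation described above can be sketched in a few lines of Python. The responses are invented for illustration and the function name `net_promoter_score` is an assumption, not a reference to any particular survey package.

```python
def net_promoter_score(ratings):
    """Percentage of promoters (9 or 10) minus percentage of detractors (6 or below)."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    # Respondents scoring 7 or 8 are in neither group but still count in the base.
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical answers to the likelihood to recommend question.
responses = [10, 9, 9, 8, 8, 7, 7, 6, 5, 9]

nps = net_promoter_score(responses)
print(f"Net Promoter Score: {nps:.0f}%")
```

Here four of the ten respondents are promoters and two are detractors, so the score is 40% minus 20%.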

The average Net Promoter Score for b2b companies is between 20% and 25%. It is not unusual to see negative Net Promoter Scores and it is rare for a company to achieve a score as high as 50% consistently. It is widely believed that there is a strong correlation between a high Net Promoter Score and growth in market share and loyalty.

I suggest that there are four steps to improving customer satisfaction and loyalty.

4 Important Steps to Improving Customer Satisfaction

1. Identify Satisfaction Levels (And The NPS) And What Is Driving Them

The starting point for any improvement plan is to know the current level of customer satisfaction and what is driving it. Let us assume that a survey has been carried out and a company achieves an overall satisfaction score of 7.8 out of 10 and a Net Promoter Score of 24%. Further examination shows that the key drivers of the overall satisfaction score are the speed of response to enquiries, certain aspects of quality, and a general lack of innovation. It is determined from the survey results that any failings on any or all of these three factors have a marked effect on the overall satisfaction score for the company. Having invested in a customer satisfaction measurement program and arrived at an understanding of where the company stands, what should be the next steps?
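The text does not say how the key drivers are derived from the survey results. One common and simple approach, shown here only as an assumption, is to correlate each attribute score with the overall satisfaction score and rank the attributes by the strength of that relationship. The data, attribute names and the helper `pearson` below are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long lists of scores."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of equal length with at least two values")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when all scores are identical")
    return cov / sqrt(var_x * var_y)


# Hypothetical scores from six respondents: overall satisfaction and two attributes.
overall = [8, 7, 9, 6, 8, 7]
attributes = {
    "speed_of_response": [8, 6, 9, 5, 8, 7],
    "invoice_accuracy": [8, 8, 7, 8, 9, 8],
}

# Rank attributes by how strongly they move with overall satisfaction.
ranked = sorted(
    attributes,
    key=lambda name: abs(pearson(attributes[name], overall)),
    reverse=True,
)

for name in ranked:
    print(f"{name}: r = {pearson(attributes[name], overall):.2f}")
```

A correlation of this kind shows association rather than cause, and a real study would use far more respondents, so the ranking is best treated as a prompt for discussion in the workgroups rather than a verdict.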

2. Establish Workgroups To Determine Strategies And Tactics For Improving Customer Satisfaction

Intuitively, people who are first exposed to customer satisfaction findings believe that the way forward is to examine individual responses and deal with each of them in turn. When an adverse comment is shown in a customer satisfaction report the usual cry from someone in the audience is “tell me who said that”. Salespeople understandably want to visit customers who have given them a low score and put things right.

However, this is not to be recommended. Firstly, the respondents who have fed back their customer satisfaction scores and comments usually do so in the belief that their responses are confidential. It would be embarrassing to a respondent if they were to criticise a sales representative in confidence and then have to face that person who had learned what was said.

Furthermore, any failure to adequately serve one company is likely to be evidence that the same failure exists within a wider group of customers that have not been polled in the survey. The way forward is to address systemic issues, not individual or granular ones.

It is an obvious point to make, but improving a customer satisfaction score requires someone to sort out those factors which are pulling the score down. Following up on the earlier example, this would mean improving the speed of response to customer contacts, rectifying the quality problems, and becoming more innovative. How can this be done?

Most customer satisfaction initiatives are seen to be the responsibility of the marketing department – but this must surely be wrong. The solution to improving customer satisfaction should be a companywide responsibility.

It is worth considering setting up workgroups that devise an action plan and ensure that it is followed through. In the example which is being considered, there could be one workgroup dedicated to improving quality, a second taking responsibility for customer service, and a third for driving innovation.

Small workgroups of four or five people are more likely to make progress than larger ones where there could be too much debate and too little action. The composition of the workgroup should include someone with a deep understanding of the issue that is under consideration – for example, quality, service or innovation. However, it may be appropriate to include people from other disciplines as they will provide a wider context and offer fresh challenges and ideas that come with an outsider’s view.

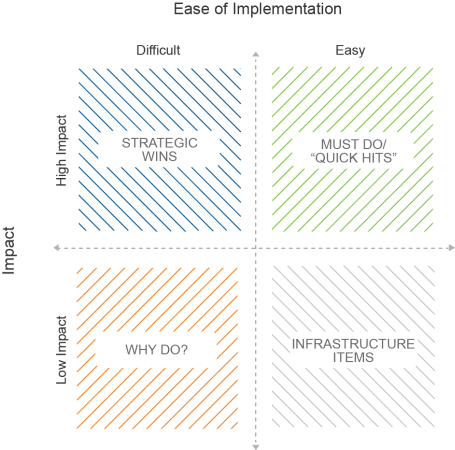

Any plan for improving customer satisfaction must address fundamental issues, some of which may be strategic or long-term and require a significant financial investment. However, there are also sure to be issues that cost very little and can be addressed easily. Although their impact could be quite small, they are worth doing so that everyone feels progress is being made. These can be simple things such as ensuring that everyone has a standard voicemail message on their mobile phone or a consistent sign-off at the end of their e-mails. A very good start would be to make a comprehensive list of all the touch points at which a customer comes into contact with the company and identify where most problems occur.

3. Have The CEO Approve The Strategies And Communicate The Importance Of Making Improvements Within A Tight Timescale

Communicating customer satisfaction initiatives throughout a large company is not easy. The company may have hundreds if not thousands of employees, and presenting the findings and action plan to every one of them would be costly and would take forever. However, not everyone needs to see the detail or to hear about the actions that are outside their area of responsibility. Dissemination programs can be devised to communicate the right information, to the right groups, in the right way. This could involve cascading presentations to team leaders who in turn inform the people they work with.

One solution adopted by a company implementing an improvement programme was to make a 10-minute film featuring the CEO of the company, who used this opportunity to give his support to the initiative. It included summary presentations from the communications team, who were able to clearly state the problems and solutions, and presentations from members of the workgroups outlining what was expected of everyone. The film was shown on the company intranet and on screens placed around areas where people congregate, such as the restaurants and lobby areas.

The involvement of the CEO is crucial. Customer satisfaction is the responsibility of the Board as it is a company-wide philosophy and not the responsibility of any single person or group of people. If the CEO identifies improved customer satisfaction as one of his or her key initiatives, it will be more readily picked up as a key initiative by staff members down the line.

4. Establish Simple Measures To Check That The Program Delivers Improvements

We live in a survey-weary world where it is not practicable to return to customers at too frequent intervals to ask for feedback. Indeed, customers are likely to be frustrated if they are constantly pestered to rate their satisfaction and feel that few or no improvements are being made. It can take an inordinate amount of time for improvements to become evident and recognised in the marketplace. This is another reason why some things need doing quickly so that customers can see that a start has been made.

Although the Board may be impatient for a dashboard that frequently delivers customer satisfaction measurements, these may not be possible with a relatively small customer base.

Most customer surveys are carried out annually unless the customer base is large enough for fresh samples to be interviewed on a regular basis to measure progress. However, for the majority of business to business companies, measures must be found other than customer satisfaction surveys.

There are many different measures that can be used as a proxy for customer satisfaction. Internal measures related to the subject of improvement will be relevant. For example, if a required improvement is faster deliveries, measurements can be devised that track the speed with which an order is dispatched. If improvements are required in the frequency with which customers are contacted, measurements can be introduced to show how many visits are made to customers, how many phone calls are made, who makes them, the purpose of the call, etc. If the problem is product quality, measures can be taken of the quality as it comes off the production line or leaves the company.

In addition to such obvious measures of improvement, a company could introduce a self-policing measurement. The customer service teams could be asked to predict the scores that they believe individual customers would award them. If these ratings prove to be overgenerous when customers finally give their views in the annual survey, there could be repercussions: bonuses and personal development programmes could be affected.
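As a rough sketch of how such a self-policing check might work, the team's predicted scores can be compared with the scores customers actually give in the annual survey. The customer names, scores and the half-point tolerance are all assumptions made for illustration.

```python
def overestimates(predicted, actual, tolerance=0.5):
    """Return customers whose predicted score exceeds the surveyed score by more than the tolerance."""
    return {
        customer: round(predicted[customer] - actual[customer], 2)
        for customer in predicted
        if customer in actual and predicted[customer] - actual[customer] > tolerance
    }


# Hypothetical scores: what the customer service team expected versus what customers gave.
predicted = {"Customer A": 9, "Customer B": 8, "Customer C": 8}
actual = {"Customer A": 7, "Customer B": 8, "Customer C": 9}

gaps = overestimates(predicted, actual)
for customer, gap in gaps.items():
    print(f"{customer}: team overestimated by {gap} points")
```

Only overgenerous predictions are flagged. A customer who scores the team higher than expected, like Customer C in this example, is not reported.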

In Conclusion

In this White Paper I have explained how to interpret customer satisfaction scores and more importantly how to use these to improve customer satisfaction in four important steps:

- Identify customer satisfaction levels and what is driving them

- Establish workgroups to determine strategies and tactics for improving customer satisfaction

- Have the CEO approve the strategies and communicate the importance of making improvements within a tight timescale

- Establish simple measures to check that the program delivers improvements

A constant improvement in customer satisfaction should be in the mission statement of virtually every company. The benefits are huge. There will be a reduction in customer churn. There will be increased loyalty. And, not least, there will be increased profitability. In work carried out by Anderson, Fornell and Lehmann more than 16 years ago, and never challenged since, the authors determined that for every one percent per year increase in customer satisfaction over a five year period, there is a cumulative increase of 11.5% in net profitability.

Increasing customer satisfaction is not complicated, but it is hard work – and it is worth it.
